When I was ten years old I visited the ruins in Cornwall where King Arthur had been conceived and born, at least in legend. There at Tintagel Castle, surrounded by the ocean air and the jagged rocks, I separated from my mother and sister and made my way down to the beach. And on the beach of Tintagel, right near Merlin’s cave, I spotted it in the sand. A stone. But not just any stone—a stone ax head. It could have been nothing else. It was shaped just like an ax, being unnaturally thick at the head, which was a smoothed blunt blade, and with near right angles it tapered to a point at the back. There was a notched cleft down the middle, to tie it to a shaft. As a 10-year-old boy in a place already dreamy with legend, I pocketed it, for I felt the Neolithic ax had come to me specifically, as if a Lady of the Lake had tossed it ashore. I am looking at it on my desk now.

Later I learned that such Neolithic axes are not so rare—much like ancient Roman coins, they were mass-produced, and you can buy them on eBay cheaply due to finds like mine. This one from Tintagel beach is a perfect specimen, although the ocean likely washed away its provenance. But on that day, even amid all the other sandy stones, it stood out to me immediately. I knew it was a tool instinctively, the way a baby knows the nipple.

The philosopher Henri Bergson wrote that:

We should say not Homo sapiens, but Homo faber.

Homo faber means “man the maker.” For if anything defines humans, it is tool use. I know it is now standard, in our rush to dethrone humanity, to play up that other animals also sometimes use tools. But for us, unlike for other animals, tools are our evolutionary niche. We have been making stone tools for at least 3.3 million years. We co-evolved with tools. At first, we made them from wood and bone and stone; later we began to craft abstract tools too. Language is a tool. Math is a tool. All of our vaunted cognition is, in some sense, a tool for a more protean mental firmament, which probably is consciousness itself. Heidegger’s term for this aspect of our consciousness was Zuhandenheit: “readiness to hand.”

Now, we live in an age of tools that can talk back to us. When ChatGPT and the other LLMs appeared on the scene, and I first typed into a chat window, I experienced amazement. It was the legendary Turing Test, and I was living it!

REPENT, THE SINGULARITY IS NIGH!

Do not doubt that we are at the absolute peak of the AI hype cycle. In monetary terms, investment cannot actually go on increasing at these rates without leading to basically impossible numbers. A major falling out between the DoD and Anthropic dominates the news (even amid war).

In the months leading up to all this, a bunch of commentators have jumped aboard the bandwagon of AI hype: The Wall Street Journal advises readers to “Brace yourself for the AI Tsunami” and The New York Times is saying that AI might completely change the fundamentals of human existence. Popular bloggers are writing that “AI can already do social science research better than most professors,” and that “the humanities are about to be automated” and that “superintelligence is already here.”

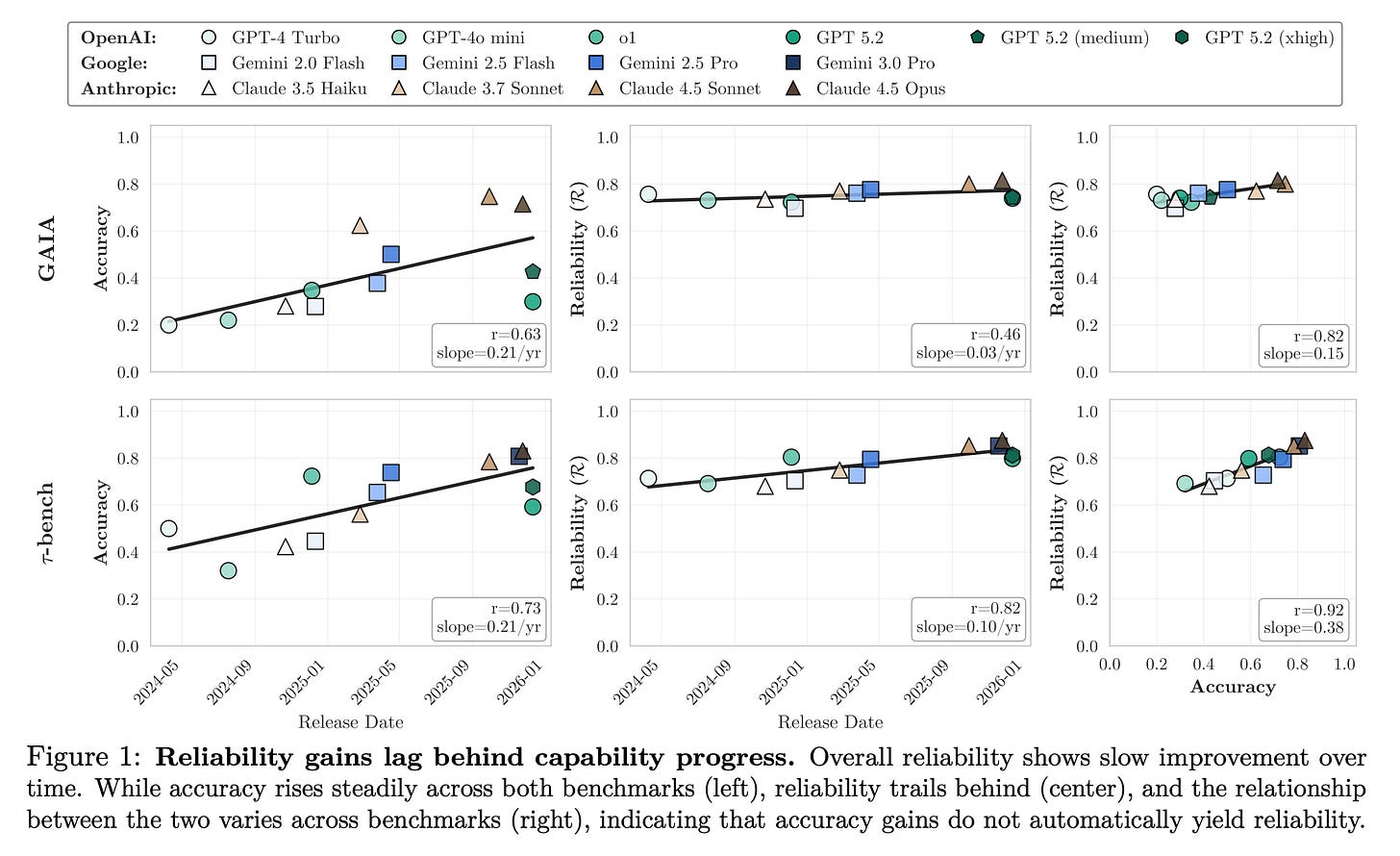

Meanwhile, fictional doomsday reports from the year 2028 go hyper-viral and impact the stock market as the authors imagine human unemployment leading to an endless great depression. The infamous METR graph continues to accelerate (and everyone ignores that METR tasks are a tiny sample size for a slim number of domain-specific programming tasks, all based on a dubious analogy to human-time-spent-on-task, and some of the authors have tried to downplay it because their graph has been so misused). There are usually many charts involved in the Great AI Debate. I could show you charts too! Like that, despite improvements on benchmarks, the actual reliability of AI is on an almost-flat trajectory for many tasks.

But others can then show other charts, and so on, ad infinitum. I don’t think you can decide the future by a couple of charts.

No, there’s a better form of argument about AI, one which I am finally comfortable making: the argument from experience. There simply has been enough time now to see clearly how LLMs transformed the intellectual work of writing, and how this reflects their fundamental nature. My proposal is that we simply extrapolate what has happened to text production to all the other intellectual domains LLMs will ever touch.

For if everything that anyone can do on a computer is soon to be automated (as Andrew Yang is now preaching will happen in the next 12-18 months), then this process should have started with writing years ago. Yet, beyond mass-producing stilted emails and stilted social media posts and stilted essays, the impact of LLMs on writing itself has not really been to improve or accelerate good writing overall. We are not in a glut of good writing. We are in a dearth of it. This is surprising and counterintuitive, because for LLMs, words are their womb, their mother, their literal atoms, and yet their impact on writing as a whole has been mostly to generate mountains of slop, while, on the positive side, helping with efficiency and research and editing and feedback, all things that only marginally improve already-good pieces. There are no signs of a burgeoning “text singularity” in the words output by our civilization, and words are the most sensitive weathervane for AI capabilities.

If LLMs were a true source of intelligence to rival humans, then discovering them should be like discovering oil. And if we were climbing the curve of an intelligence explosion, their surplus intellect would be improving our civilization’s text as a whole in noticeable ways. If, instead, LLMs are tools, then we should expect their impacts to be a mirror of us, to concern efficiency and scale rather than quality, and to depend strongly on how people use them.

So let me ask you: if you took an observer from 2016 and teleported them a decade ahead to our time, and then showed them your social media feed or your emails and other media in general, what would their main response be? Would it be “Wow, everything is more intelligent now!” Or would it be “Why is everyone writing like a pod person now?”

It’s been six years since GPT-3, and there has been no “move 37” moment for writing (as there was for AlphaGo’s creative play of Go). Not even close.

HECK, LET’S LOWER THE BAR

If you ask a leading AI to write a children’s book, you’ll see that AI has not demonstrated exponential improvements at whatever amorphous and hard-to-define (but very real) skill “children’s book authorship” is. Indeed, the upper bound of the needle hasn’t moved much for writing in general, as people forget that older models like GPT-3 were already extremely good at short sprints of text when they kept it together. LLMs have been able to write a passable approximation of a children’s book for almost half a decade… and yet, the real lesson from this is that approximation is not automation.

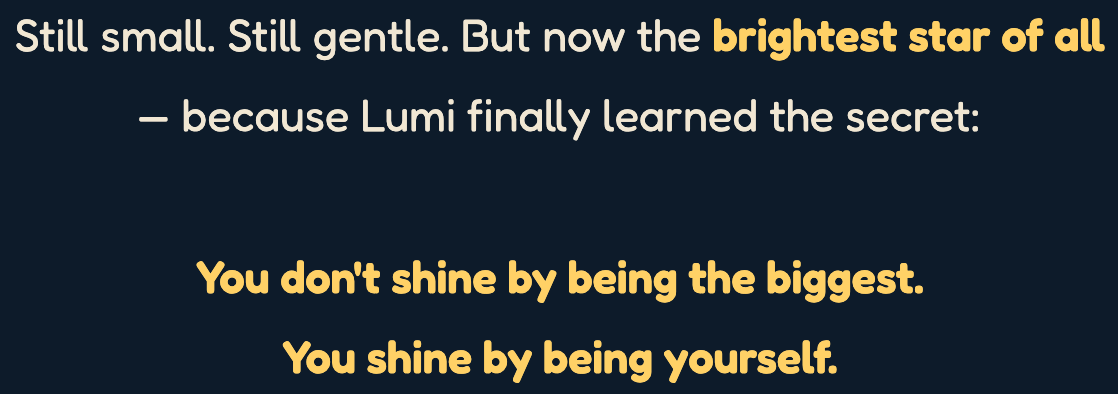

Below is Anthropic’s latest model (arguably the smartest AI in existence) trying to write a good children’s book, by itself, without guidance and hand-holding. The result, which it called “beautiful,” was exactly what a smiling alien would write if it had never interacted with a child before. It was a “blurry jpeg” of a children’s book. Here’s the sappy ending:

gag me with a spoon

People love to onanistically declare that the “it’s just autocomplete” or the “stochastic parrot” criticism is soooo outdated and soooo stupid.

And yet… isn’t “You shine by being yourself” basically autocomplete for a children’s book? Can we admit that? Or do we have to pretend these insipid outputs are from a machine on the verge of artificial superintelligence (coming in literal months)? I simply refuse to play along. If you actually interact with them, and ask them to do things they aren’t directly trained on, these models remain spectacularly intellectually shallow and incompetent when there’s no detailed human prompting to give them clues and hints and guideposts. Charged with writing children’s literature independently, based on their own ideas and own attempts at style, an AI like Anthropic’s Claude will always draw from the same small well. The outputs still feel like an LLM to anyone with an ear for language, or an eye for content.

The entire life of the artist, indeed, the life of the mind in general, is defined by resistance to slop. If you want to write an actually good children’s book you’ve got to put your own perspective into it, and LLMs are “views from nowhere.” Consider how deep and complex good children’s books actually are. The Giving Tree is about unrequited parental sacrifice. The Velveteen Rabbit is about ontology. Madeline is about Paris-maxing. The Rainbow Fish? Communism. The Very Hungry Caterpillar? Metamorphosis. In Where the Wild Things Are, at the end of the book, the Wild Things try to eat Max. That’s a good children’s book.

(I was going to show a beautiful picture from Madeline here, as my daughter is obsessed with the “Pooh-pooh to the tiger in the zoo” scene. I took a photo of the book and asked the smartest-AI-in-existence-with-extended-thinking-on-at-the-highest-subscription-tier-available to make it look better. Presented are the results, in triptych.)

Consider AI video generation. “We’re going to automate Hollywood!” Okay, well, what happened to automating publishing? That’s a much simpler task, one that you’ve had well over half a decade to do. How’d that go? Hmm? Everyone on social media and at the companies simply declared victory (“Wow, this fan fiction looks good enough to me!”) and moved on to more capabilities, stuffing more reinforcement learning into the models, building more elaborate scaffolds, without actually getting it across the finish line for producing non-trivial and non-annoying and non-fluff text unless the model is being spoon-fed by a human. Like, you think people are going to craft highly detailed prompts for their own personalized movies? Again, we can look at writing, where everything already played out. You can already prompt your way to personalized books! It just sucks and no one does it, because when you ask the LLM to operate independently, its ideas are mostly slop. This is why, when a company like Anthropic says they’ve automated their code production, what they really mean is that they are still writing code, just at a slightly higher level of abstraction (and that’s why they still have over 100 open software developer positions, and why Boris Cherny, creator of Claude Code, said that “Engineering is changing and great engineers are more important than ever”).

HAVE LLMS MADE BOOKS BETTER?

A paper looking at large-scale Amazon data quietly appeared earlier this year, asking “Have LLMs Boosted the Creation of Valuable Books?”

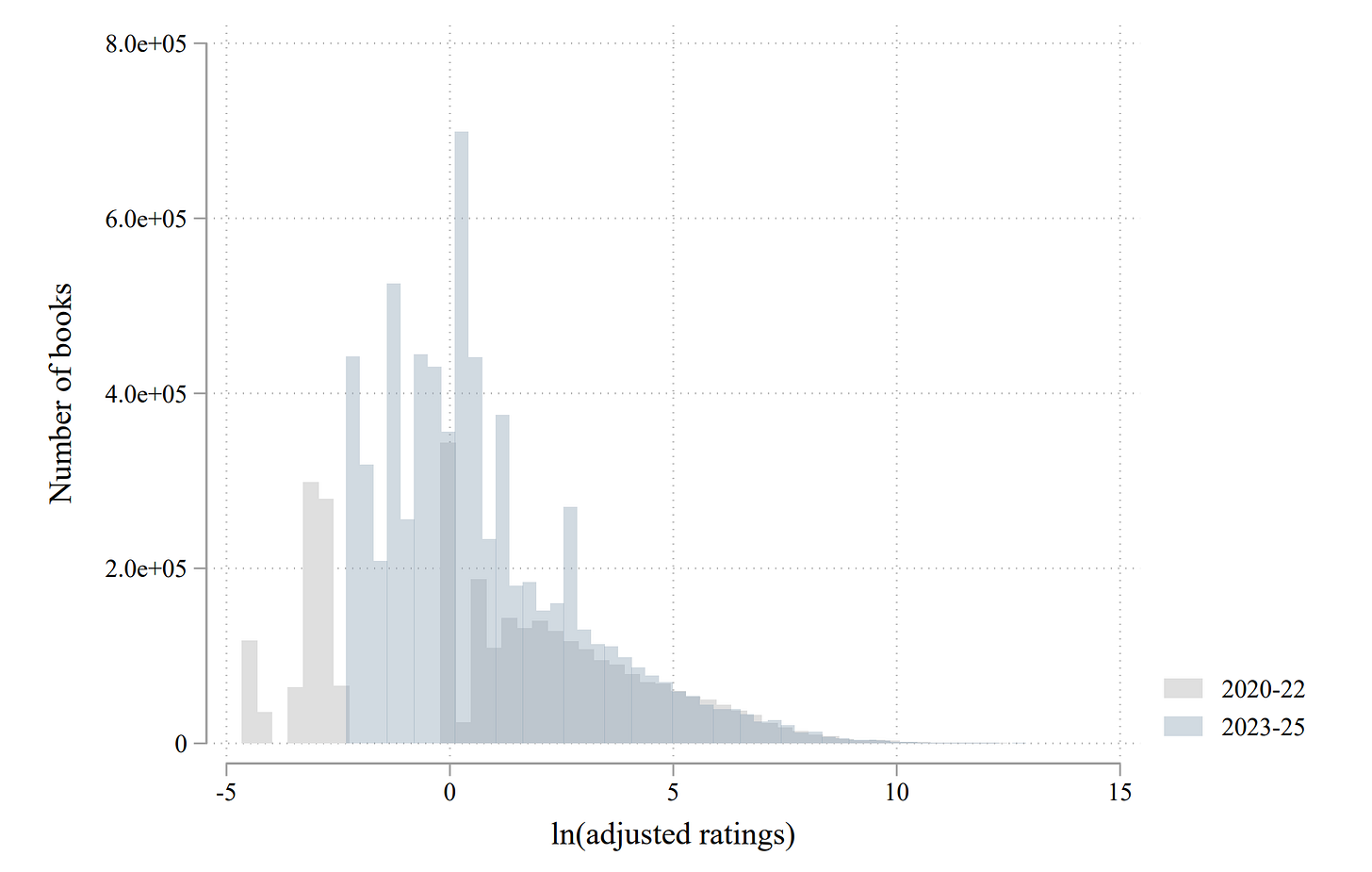

The answer to this question is that in the post-LLM era (which they date as after 2022), at least based on numbers of Amazon ratings, the average book got worse. E.g., you occasionally hear hype of the AI-assisted author mass-producing books, and yet, investigation usually reveals that they have sold more like zero books and that the story is a scam. The actual effects of LLMs on publishing were that: (a) the average book got worse, (b) the top 1,000 books in each category improved somewhat, and (c) the top 100 books in each category didn’t change in quality.

How to read: Books with high reader ratings are on the right side of the x-axis here. So you can see that 2023-2025 books are mostly slop and the best don’t change much. Also, this may be hyping up the effects of AI for various reasons, e.g., the researchers do adjustments (it is not the case that only 2020-22 books are uniquely so low rated on the left-hand side, that’s an artifact of adjustments) among other issues.

Now, the average book a reader encounters is more likely to be in the top 1,000 than below that, so the average consumer experiences an increase in quality (mostly due, it appears, to more “shots on goal” rather than actual improvements). But this beneficial effect for consumers seems to be driven by often-very-low-quality-anyways categories like “Travel” and “Outdoors,” rather than, say, “Science” and “Literature.”

So do these effects look like a new source of surplus alien intelligence? Or does it look like tool use? Consider that the authors who were already successful pre-LLMs had the most efficiency gains, supporting the “tools” theory; meanwhile, new post-LLM era debut authors produced much worse work.

After failing to automate publishing books and writing in general, the claim is now that AI will go on to automate science, math, all of academia, and finally humanity itself?

But why would the chart for scientific papers (or anything else) look different from the chart for books above? LLMs can now “write a scientific paper” or “write a mathematical paper” in the exact same sense that they’ve been able to “write a book” or “write a short story” or “write an essay” for several years, all to some effect, but overall the results have been objectively mediocre given the hype, and the world is somewhat stupider, rather than smarter, at least on average.

WELCOME TO WRITER HELL, MATHEMATICIANS

Looking into the crystal ball that the last half-decade represents for writers reveals that, more likely than superintelligence, we are going to enter a world of immense, overwhelming, scientific and philosophical and mathematical slop.

Will there be some good outcomes as well? Yes! Just as there have been for writing. I am not entirely an AI pessimist. I am an AI realist: there are indeed positives to the technology, and I’m trying to find them myself (in research, for instance, or in my attempt above at making the Madeline image better, or in the fewer spelling mistakes I make now, or in sometimes asking LLMs to double-check something). Yes, some B-tier bloggers have suddenly and mysteriously transformed into A-tier bloggers, who all kind of sound the same in their A-tier-ness. Previously A-tier bloggers have gotten a lot less use from the technology, and are not noticeably better than they were years ago.

LLMs have broadly failed to automate text generation in general for the precise reasons I laid out all the way back in my 2022 essay “AI Art Isn’t Art.”

They struggle because they are fundamentally imitators, and when not told who or what to mimic they are intellectually shallow. You put more bits in, you get better bits out. Fine. That’s a tool. A computer or a piano is like that too. This is not something that will trigger a singularity of self-improvement or take the jobs of all of humanity or create “machines of loving grace” or any of the stuff people are now regularly promising is literally going to happen in like… a year. E.g., humans can edit their text, making the block of marble look ever more like the statue inside. When it comes to flying solo, first drafts by LLMs are often better than their last. LLMs can’t even recursively improve a five-paragraph essay, let alone themselves.

So we will experience a long march by AI across intellectual disciplines (most lately, mathematics) where the hype reawakens with each new expansion, and in the wake of the long march some things do change but ultimately the world is not reconfigured as has been promised and the actual experienced intelligence level of the world, especially the top where it matters most, remains mostly unchanged, because it’s still just humans using tools. Bits in, bits out. That’s been the effect of LLMs on text production (in both book publishing and on social media), and it seems very likely to be their effect on almost everything else too. In this conservative view, by 2030, the top 100 math papers of the year won’t look spectacularly different from the top 100 math papers of 2020. The top 1,000 papers? Maybe they’ll be marginally improved, like with books or blogs. And at the backend, the entire field of mathematics will be buried, absolutely buried, in slop. Companies will continually say their systems accomplish things “autonomously” but that word is hard to define for LLMs, where a prompt is an injection of human intelligence, and a scaffold too is an injection of human intelligence (but the advantage of scaffolds is that you can put a ton of domain-specific knowledge and tips and tricks and guides in the scaffolds, keep them private because it’s proprietary, and then say the models “solved it autonomously!”).

At some point you have to use your capacity as Homo faber and call it: LLMs have behaved precisely as we would expect tools to behave when it comes to changing the nature of first-impacted and frontline intellectual disciplines like writing. The best users gain efficiencies and expand, to some degree, their capability range, especially for the mid-list of intellectual output. The worst users flood the zone.

So at least when it comes to the near-term future and the foreseeable scaling of the current technology, “merely” the exact same thing that has happened to writing will happen to every subject on Earth. But that’s not replacing humanity. That’s not the singularity. You’re just confused about what we are. We are Homo faber, and we have been doing this for 3.3 million years, and our rocks have gotten very complex—so complex you’re forgiven for not thinking they’re rocks.